- Blog

- Cities In Motion Download Pc

- Zemax Opticstudio Ver15 Sp1 13

- Wan Miniport Sterowniki Download

- Ohio Drivers License

- Bryce Pro V7 Rar

- Belajar Microsof Excel Akuntansi

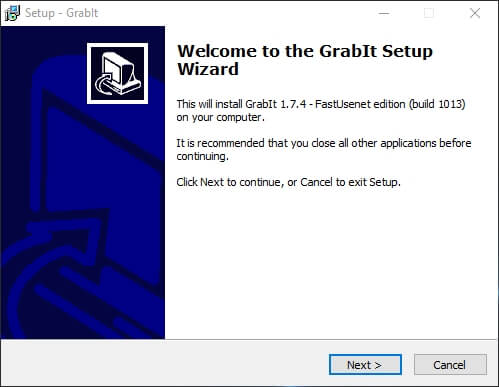

- How To Create Nzb File

- Open Port Not Confirmed

- Isi Dari Bill Of Quantity

- Wlop Gumroad Free

- Dwf To Dwg

- Igo Primo Windows Ce Free Download

- Active Webcam 11.6 Serial Key

- Camtasia Studio 8.0.4.1060 Licence Key

- Swiss Perfect 98 Help Me

- 50 Viera Plasma 1080p Hdtv

- Utorrent pro crack 3-4-7 42330

- Free memory cleaner for mac

- Word formatting marks 2013

- Gns3 vm install on esxi

- Getting started on youtube video editing software

- Microsoft word 2011 free trial download

- Schoolminder create shortcut get activex error

- Ibm afp printer driver for windows 7

- Indian movie commando full movie hd

- Mac torrent site reviews

- Vsphere client for mac

- Reddit best mac cleaner software

- Intel q35 express chipset graphics driver league of legends

- Make bootable stick yosemite mac os x

- Free mcafee internet security for 1 year

- Zen lite software

- The sims 4 custom content install guide

- Personalized motorcycle license plates michigan military

- Telltale game of thrones endings how many

- How to listen audible on mac

- The sims 4 skidrow torrent

- Best mkv player windows xp

- Preview pdf editor make text smaller

- Most recent apple update

An NZB file describes a Usenet posting so that a newsreader can fetch it directly. It lists the message-IDs of the articles that make up the posting, so the newsreader downloads exactly those articles rather than all of the headers in a particular newsgroup. NZB files are saved in the XML file format.
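To make the XML layout concrete, here is a minimal sketch that reads an NZB document with Python's standard library. The sample document, the newsgroup name, the message-IDs, and the function name `list_files` are all made up for illustration; only the element names and the namespace follow the NZB format.

```python
import xml.etree.ElementTree as ET

# Namespace used by NZB documents.
NZB_NS = {"nzb": "http://www.newzbin.com/DTD/2003/nzb"}

# Hypothetical sample: one file split into two article segments.
SAMPLE_NZB = """<nzb xmlns="http://www.newzbin.com/DTD/2003/nzb">
  <file poster="poster@example.com" date="1071674882" subject="example.bin">
    <groups>
      <group>alt.binaries.example</group>
    </groups>
    <segments>
      <segment bytes="4501" number="2">part2of2.def@example.com</segment>
      <segment bytes="102394" number="1">part1of2.abc@example.com</segment>
    </segments>
  </file>
</nzb>"""


def list_files(nzb_text):
    """Return one dict per <file>, with its groups and ordered segments."""
    root = ET.fromstring(nzb_text)
    files = []
    for file_el in root.findall("nzb:file", NZB_NS):
        groups = [g.text for g in file_el.findall("nzb:groups/nzb:group", NZB_NS)]
        # Each segment is (part number, size in bytes, message-ID).
        segments = sorted(
            (int(s.get("number")), int(s.get("bytes")), s.text)
            for s in file_el.findall("nzb:segments/nzb:segment", NZB_NS)
        )
        files.append(
            {"subject": file_el.get("subject"), "groups": groups, "segments": segments}
        )
    return files
```

A downloader would then request each message-ID from a server that carries one of the listed groups and join the decoded parts in segment order.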

I have been searching on Google, but I might be searching for the wrong things.

How can I index a newsgroup server? If I already have a list of NZB files, how hard is it to write a little command-line tool that downloads them?

Poul K. Sørensen

1 Answer

A vague question.

A list of NZB files will not help you index a server.

Indexing a newsgroup server means you will have to write a little library that downloads all the posts of the Usenet server, or at least their headers. The headers alone (the subject lines) take many gigabytes to store. I believe you need several terabytes available just to store all the headers.

A less storage-hungry approach is to index only the last X days, but the index might still get big.
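As a rough sketch of the "last X days" idea, the filter below keeps only headers whose `Date` value falls inside a retention window. It assumes the headers have already been fetched into dictionaries with a `date` key in the usual article date format; the dictionary layout and the name `recent_headers` are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone
from email.utils import parsedate_to_datetime


def recent_headers(headers, days, now=None):
    """Keep the headers posted within the last `days` days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=days)
    kept = []
    for header in headers:
        posted = parsedate_to_datetime(header["date"])
        # Dates without a usable time zone are treated as UTC (an assumption).
        if posted.tzinfo is None:
            posted = posted.replace(tzinfo=timezone.utc)
        if posted >= cutoff:
            kept.append(header)
    return kept
```

Running the same filter over the stored index on a schedule would also let old entries be dropped, which keeps storage bounded by the window rather than by the age of the server.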

Once you have all the post headers, you need some logic to group together the posts that belong to one release of any kind (movie, program, ISO). For each group, you combine its posts into an NZB XML file, which you can then use with your favorite Usenet downloader.
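The grouping step could be sketched as follows. This is a deliberately simple heuristic, not how any particular indexer works: it assumes each subject ends in a part counter such as `(1/2)`, groups parts that share a poster and the rest of the subject, and writes them into one NZB document. The header dictionaries, the sample data, and the function names are hypothetical.

```python
import re
import xml.etree.ElementTree as ET
from collections import defaultdict

NZB_XMLNS = "http://www.newzbin.com/DTD/2003/nzb"
# Matches a trailing part counter such as " (3/50)".
PART_RE = re.compile(r"\s*\((\d+)/(\d+)\)\s*$")

# Hypothetical headers, deliberately out of order.
SAMPLE_HEADERS = [
    {"subject": 'demo "a.bin" yEnc (2/2)', "poster": "p@example.com",
     "date": 1071674882, "group": "alt.binaries.example",
     "message_id": "<a2@example.com>", "bytes": 200},
    {"subject": 'demo "a.bin" yEnc (1/2)', "poster": "p@example.com",
     "date": 1071674880, "group": "alt.binaries.example",
     "message_id": "<a1@example.com>", "bytes": 100},
]


def group_segments(headers):
    """Group headers into files keyed by (poster, subject without counter)."""
    files = defaultdict(list)
    for header in headers:
        match = PART_RE.search(header["subject"])
        if match is None:
            continue  # no part counter, so this sketch ignores the post
        base = header["subject"][: match.start()]
        files[(header["poster"], base)].append((int(match.group(1)), header))
    return files


def build_nzb(files):
    """Serialize the grouped files as an NZB XML string."""
    root = ET.Element("nzb", xmlns=NZB_XMLNS)
    for (poster, base), parts in sorted(files.items(), key=lambda kv: kv[0]):
        parts = sorted(parts, key=lambda part: part[0])
        first = parts[0][1]
        file_el = ET.SubElement(
            root, "file", poster=poster, date=str(first["date"]), subject=base
        )
        groups_el = ET.SubElement(file_el, "groups")
        ET.SubElement(groups_el, "group").text = first["group"]
        segments_el = ET.SubElement(file_el, "segments")
        for number, header in parts:
            segment = ET.SubElement(
                segments_el, "segment", bytes=str(header["bytes"]), number=str(number)
            )
            # NZB stores the message-ID without the angle brackets.
            segment.text = header["message_id"].strip("<>")
    return ET.tostring(root, encoding="unicode")
```

Grouping several files into one release (for example, all the parts of a multi-volume archive) needs a further heuristic on top of this, which is where most of the real effort goes.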

But if your question is how to download NZB files from several URLs that you already have, there is a nice tool called webget that you can use to download a file from any URL.
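If you would rather script the download step yourself, a small sketch using only Python's standard library could look like this. It is an alternative to a dedicated download tool, not a description of one; the function name `download_all` and the fallback file name are assumptions.

```python
import shutil
import urllib.request
from pathlib import Path
from urllib.parse import unquote, urlparse


def download_all(urls, dest_dir):
    """Download each URL into dest_dir and return the saved paths."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    saved = []
    for url in urls:
        # Use the last path component of the URL as the local file name.
        name = Path(unquote(urlparse(url).path)).name or "download.nzb"
        target = dest / name
        with urllib.request.urlopen(url) as response, open(target, "wb") as out:
            shutil.copyfileobj(response, out)
        saved.append(target)
    return saved
```

Note that this only saves the NZB files themselves. Fetching the articles they point to still requires a Usenet client that speaks NNTP.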

Wolf5

